Food fraud quietly erodes margins, undermines consumer trust and distorts fair competition. This article unpacks why traditional controls can miss key signals of adulteration and mislabeling – and how high-reproducibility NMR screening, coupled with large, continuously validated databases, has established itself as a novel rapid method for verifying authenticity across different food products.

The problem we keep underestimating

The story of modern food is a story of global complexity: long supply chains, multiple processors and a rising mix of premium claims (single origin, monofloral, cold extracted, not from concentrate). That complexity, paired with inflationary pressure, creates incentives and opportunities for dilution, substitution and misrepresentation. Global law enforcement operations have documented the scale in stark terms: Operation OPSON routinely reports thousands of tons of counterfeit or substandard food seized and hundreds of arrests each campaign; during the COVID-19 period, seizures of expired or relabeled products spiked as criminals capitalized on supply disruption.

This is not an abstract risk confined to niche categories. Targeted actions in OPSON have repeatedly focused on honey, wine, oils, meat, fish and beverages – the very categories where provenance and quality attributes command price premiums. The implication for manufacturers and retailers is twofold: (1) traditional checks, sampling plans and certificates of analysis can miss sophisticated fraud; and (2) the cost of leaving the problem unsolved extends far beyond a single recall – think brand damage, unfair competition for honest producers and wasted sustainability efforts.

Regulators have responded. In Canada, for example, the CFIA conducted national honey authenticity surveillance using both Stable Isotope Ratio Analysis (SIRA) and NMR to detect foreign sugars, preventing non-compliant product from reaching consumers and demonstrating that dual-method screening can materially improve detection.

The current toolkits and practical challenges

Today’s authenticity toolkit is broad – IR/NIR/FTIR/Raman, HPTLC, LC/GC-MS, IRMS/SIRA and NMR – and each method has its own strengths. In practice, surface-sensitive spectroscopies are fast and deployable but can suffer from overlapping bands and matrix effects; chromatographic mass spectrometry offers exquisite sensitivity for known targets but usually at the cost of sample preparation, time and ion suppression challenges in complex matrices; IRMS is robust for C4/C3 carbon source questions and geographical clues but offers limited adulterant profiling on its own. The effects on the factory floor or in a receiving lab are latency (results arrive after the product has moved), narrow scope (targeted detection can miss new tricks) and burden (specialized preparation and calibration are required).

An equally important limitation is statistical power. Authenticity is rarely about a single molecule; it is about patterns, relative ratios, minor metabolites and a product’s holistic chemical fingerprint evolving with origin, season and processing. Any method that fails to situate a sample within a large, representative distribution of authentic references risks false negatives (sophisticated frauds pass) or false positives (innocent natural variability fails). That’s why agencies like CFIA have combined methods and built multi-year datasets to baseline markets, focus inspections and escalate enforcement where risk is higher.
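To make the idea of situating a sample within a distribution of authentic references concrete, here is a minimal Python sketch. It is deliberately simplistic: it computes a per-marker z-score against a handful of authentic reference values and lists the markers that fall far outside the reference spread. The marker names, the numbers and the threshold of 3 are illustrative assumptions, not real data and not any agency's or vendor's method; production chemometric models are multivariate and rest on far larger datasets.

```python
from statistics import mean, stdev


def zscores(sample, references):
    """Per-marker z-scores of one sample against authentic references.

    sample:     dict mapping marker name -> measured value
    references: list of dicts with the same markers (authentic samples)
    """
    scores = {}
    for marker, value in sample.items():
        ref_values = [ref[marker] for ref in references]
        mu = mean(ref_values)
        sigma = stdev(ref_values)  # sample standard deviation
        scores[marker] = (value - mu) / sigma if sigma > 0 else 0.0
    return scores


def flag_atypical(sample, references, limit=3.0):
    """Markers whose |z| exceeds `limit` (a hypothetical threshold)."""
    scores = zscores(sample, references)
    return sorted(m for m, z in scores.items() if abs(z) > limit)


# Made-up illustrative numbers, not real honey data.
authentic = [
    {"fructose_glucose_ratio": 1.20, "sucrose_pct": 1.0},
    {"fructose_glucose_ratio": 1.25, "sucrose_pct": 1.4},
    {"fructose_glucose_ratio": 1.15, "sucrose_pct": 0.8},
    {"fructose_glucose_ratio": 1.30, "sucrose_pct": 1.2},
    {"fructose_glucose_ratio": 1.22, "sucrose_pct": 0.9},
]
suspect = {"fructose_glucose_ratio": 1.21, "sucrose_pct": 6.5}

print(flag_atypical(suspect, authentic))
```

With only five references the estimated spread is itself unreliable, which is the practical argument for large, representative databases: the wider and better sampled the reference distribution, the less often natural variability is mistaken for fraud, and the reverse.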

What NMR does differently – and why it matters operationally

Nuclear Magnetic Resonance (NMR) approaches the problem from first principles: it captures an inherently quantitative, highly reproducible spectral fingerprint of the entire sample under standardized conditions. In practice, that means a single ¹H NMR acquisition can deliver both targeted (e.g., foreign sugar resonances) and untargeted (chemometric pattern matching) insight, without fractionation and with minimal sample preparation. The power of NMR for authenticity, however, comes not just from physics – it comes from databases.

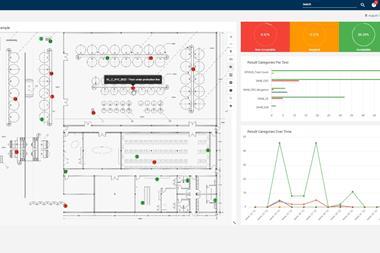

The Bruker FoodScreener implements this at scale: a lab instrument carrying an automated software pipeline that (1) enforces a simple SOP for sample prep and QC, (2) acquires spectra with high inter-site reproducibility, and (3) compares each sample to large, matrix-specific databases built in collaboration with leaders across the supply chain. Those databases underpin compound quantification, origin and variety classification and adulteration detection, and they are continuously expanded and revalidated via performance tests and international ring trials – so the models evolve as fraud tactics do. For honey, juice and wine, this sample-to-report automation standardizes decisions and reduces operator variability across sites.

Crucially, this is not just theory. Customs and border agencies have adopted NMR screening in enforcement programs precisely because it supports country-of-origin (COO) verification and foreign sugar detection at scale. In the United States, CBP documented the acquisition and use of NMR for honey import testing to combat transshipment and COO evasion under anti-dumping orders; in Canada, CFIA explicitly combined NMR and SIRA in national honey surveys to detect misrepresentation.

What’s novel here? Not that NMR exists – it has for decades – but that turnkey, database-driven NMR has matured into a rapid, repeatable screening platform for routine authenticity control. High spectral reproducibility (even across instruments and operators), standardized QC checks, and harmonized, automated analysis and data interpretation transform NMR from a specialist research instrument into a factory- and border-ready decision tool.

How to evaluate rapid authenticity solutions (a buyer’s checklist)

When assessing rapid screening options – NMR or otherwise – procurement and QA/QC leaders can pressure test the fit with these questions:

- Process fit & matrix scope: Is the solution truly routine-ready for your matrices (e.g., viscous honeys, turbid juices, polyphenol-rich red wines)? What sample preparation is required, and can it be standardized at scale?

- Measurement depth: Does it probe only the surface or the bulk of the sample? Does it support untargeted pattern analysis as well as targeted detection? Can it quantify multiple markers in one measurement?

- Database quality: How large and representative is the reference dataset by origin, season, variety and processing? How often is it expanded and revalidated, and by whom? (Look for ring tests and cross-lab QC.)

- Performance evidence: Are there independent or regulatory adoptions (e.g., CBP, CFIA) and published surveillance results showing real world detection of misrepresentation?

- Time to decision: What is the sample-to-report cycle time, including data transfer/analysis and operator steps? Can results be automatically routed into LIMS/ERP for release/hold workflows?

- Calibration & maintenance burden: How are QC checks (spectral quality, quantification references, temperature) enforced? Is inter-site reproducibility documented?

- Data integrity & auditability: Are decision rules transparent and versioned as models evolve? Is there an immutable audit trail linking spectra, QC checks and reports?

- Total cost to outcome: Beyond capex/opex, quantify false-negative and false-positive costs, labor, and the opportunity cost of delayed disposition (e.g., demurrage, lost freshness window). (CFIA’s programmatic approach shows the value of targeted surveillance and rapid triage.)

- Scale & ecosystem: Is there an active community of practice (labs, control bodies and industry) contributing data and harmonizing markers/thresholds to stay ahead of evolving fraud?
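The "total cost to outcome" item in the checklist above lends itself to a back-of-the-envelope calculation. The sketch below is one hedged way to frame it: expected cost per lot equals the test cost plus the probability-weighted cost of a missed fraud and of a false alarm. The function name and every number are hypothetical placeholders to be replaced with your own estimates.

```python
def expected_cost_per_lot(fraud_rate, sensitivity, specificity,
                          test_cost, miss_cost, false_alarm_cost):
    """Expected cost of screening one lot.

    fraud_rate:       assumed share of fraudulent lots (0..1)
    sensitivity:      share of fraudulent lots the method flags (0..1)
    specificity:      share of authentic lots the method clears (0..1)
    test_cost:        cost of running the test on one lot
    miss_cost:        cost of a false negative (recall, brand damage, ...)
    false_alarm_cost: cost of a false positive (retest, hold, demurrage, ...)
    """
    p_miss = fraud_rate * (1.0 - sensitivity)
    p_false_alarm = (1.0 - fraud_rate) * (1.0 - specificity)
    return test_cost + p_miss * miss_cost + p_false_alarm * false_alarm_cost


# Hypothetical inputs for illustration only.
no_screening = expected_cost_per_lot(
    fraud_rate=0.02, sensitivity=0.0, specificity=1.0,
    test_cost=0.0, miss_cost=100_000.0, false_alarm_cost=2_000.0,
)
with_screening = expected_cost_per_lot(
    fraud_rate=0.02, sensitivity=0.9, specificity=0.99,
    test_cost=50.0, miss_cost=100_000.0, false_alarm_cost=2_000.0,
)
print(f"no screening:   {no_screening:.2f} per lot")
print(f"with screening: {with_screening:.2f} per lot")
```

The comparison is only as good as its inputs. Fraud rate and miss cost are usually the hardest to estimate and dominate the result, so it is worth running the calculation across a range of plausible values rather than a single point.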

What “good” looks like at scale

Leaders treating authenticity as an operational capability (not a sporadic project) tend to follow a common path:

• Phase 1 – Pilot and baseline: Select 1–2 high-risk SKUs (e.g., premium honeys, high-value juices). Run parallel testing (current methods + NMR) for 4–8 weeks to establish distributions and align decision rules.

• Phase 2 – SOPs & automation: Hard-code sample prep and QC checks; integrate reports into LIMS/ERP and define release/hold logic. Target a single-run decision for authenticity + composition to reduce latency.

• Phase 3 – Expand & federate: Add sites and SKUs; contribute anonymized spectra to shared databases; participate in ring tests and marker harmonization with commercial labs and partners. (This “community database” model is how the method keeps pace with evolving fraud.)

• Phase 4 – Continuous improvement: Use exception analytics to refine supplier risk scoring; collaborate with regulators to align on evidence packages, reducing retest cycles and border delays. Programs like CBP’s honey testing and CFIA’s surveillance illustrate the enforcement benefit of shared methods and data.
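As an illustration of the release/hold logic mentioned in Phase 2, the following Python sketch maps one screening report to a lot disposition. The `ScreeningResult` fields, the flag names and the three outcomes are assumptions made for this example; they do not describe the report format of any particular instrument or LIMS.

```python
from dataclasses import dataclass, field


@dataclass
class ScreeningResult:
    """Hypothetical summary of one screening report."""
    lot_id: str
    qc_passed: bool                    # spectral quality, temperature, etc.
    composition_in_spec: bool          # quantified markers within limits
    authenticity_flags: list = field(default_factory=list)  # e.g. ["foreign_sugar"]


def disposition(result):
    """Map a screening result to REMEASURE, HOLD or RELEASE."""
    # A failed QC check means the measurement itself cannot be trusted,
    # so no authenticity decision is made from it.
    if not result.qc_passed:
        return "REMEASURE"
    # Any authenticity flag or out-of-spec composition blocks release.
    if result.authenticity_flags or not result.composition_in_spec:
        return "HOLD"
    return "RELEASE"


clean = ScreeningResult("LOT-001", qc_passed=True, composition_in_spec=True)
flagged = ScreeningResult("LOT-002", qc_passed=True, composition_in_spec=True,
                          authenticity_flags=["foreign_sugar"])
bad_qc = ScreeningResult("LOT-003", qc_passed=False, composition_in_spec=True)

for r in (clean, flagged, bad_qc):
    print(r.lot_id, disposition(r))
```

Checking QC before anything else reflects the ordering implied by Phase 2: a decision rule is only defensible if the underlying measurement passed its quality gates.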

Why NMR-based screening has established itself as a novel rapid method

Three shifts explain why NMR screening is moving from specialist to standard in authenticity control:

- Turnkey workflows: The step change is automation (sample prep → acquisition → analysis → report) and SOP-driven QC, which reduce expert dependency and inter-operator variability – key for multi-site food companies and government labs alike.

- Big data models: Authenticity is inherently probabilistic. Large, curated databases – 50k+ juice, 25k+ wine, ~28k honey – enable robust pattern recognition and statistically grounded decisions that better reflect natural variability. Continuous updates and ring tests keep models in lockstep with the market.

- Institutional adoption: When border agencies and national regulators adopt a method, it signals practicality and evidentiary value. The CBP program on honey imports and CFIA’s dual-method surveillance are emblematic of this mainstreaming.

It’s important to stress that NMR is not a silver bullet; it works best as a hub in a layered toolkit – triaging samples rapidly and sending borderline cases to complementary methods (e.g., LC-MS for targeted contaminants; IRMS for isotope ratios). That layered approach mirrors how enforcement programs deliver results in the field.
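The layered approach described above can be pictured as a three-band triage rule: clear passes and clear fails are decided by the screen, and only the band in between is escalated. The sketch below is a hypothetical illustration; the score scale, the band limits and the question-to-method mapping are assumptions, with the follow-up methods taken from the examples named in the text.

```python
# Hypothetical mapping from the open question to a complementary method.
FOLLOW_UP = {
    "isotope_ratio": "IRMS",
    "targeted_contaminant": "LC-MS",
}


def triage(score, pass_below=0.2, fail_above=0.8):
    """Classify an adulteration-risk score in [0, 1] into three bands.

    The band limits are illustrative assumptions, not validated thresholds.
    """
    if score < pass_below:
        return "pass"
    if score > fail_above:
        return "fail"
    return "borderline"


def route(score, open_question):
    """Return the next step for a sample after rapid screening."""
    band = triage(score)
    if band != "borderline":
        return band
    method = FOLLOW_UP.get(open_question, "expert review")
    return f"confirm with {method}"


print(route(0.05, "isotope_ratio"))
print(route(0.50, "isotope_ratio"))
print(route(0.50, "targeted_contaminant"))
print(route(0.95, "isotope_ratio"))
```

The width of the borderline band is the main design lever: a wider band sends more samples to slower confirmatory methods, while a narrower one leans harder on the screen alone.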

Bottom line

Food fraud isn’t a niche compliance issue; it’s a systems problem that saps value from responsible brands and puts consumers at risk. Traditional methods remain essential – but on their own they often lack the breadth, speed and statistical context today’s supply chains demand. By combining high-reproducibility NMR with large, continuously validated databases and automated analysis, the industry can move from after-the-fact detection to real-time, defensible decision-making – at receiving, in QC labs and at borders.

For QA/QC leaders, the message is practical: pick one or two high-risk categories, run a structured pilot and let the data tell the story. The organizations already doing this – border agencies, national regulators and leading food companies – are showing that rapid authenticity screening can be both novel and dependable, elevating trust while protecting margins.